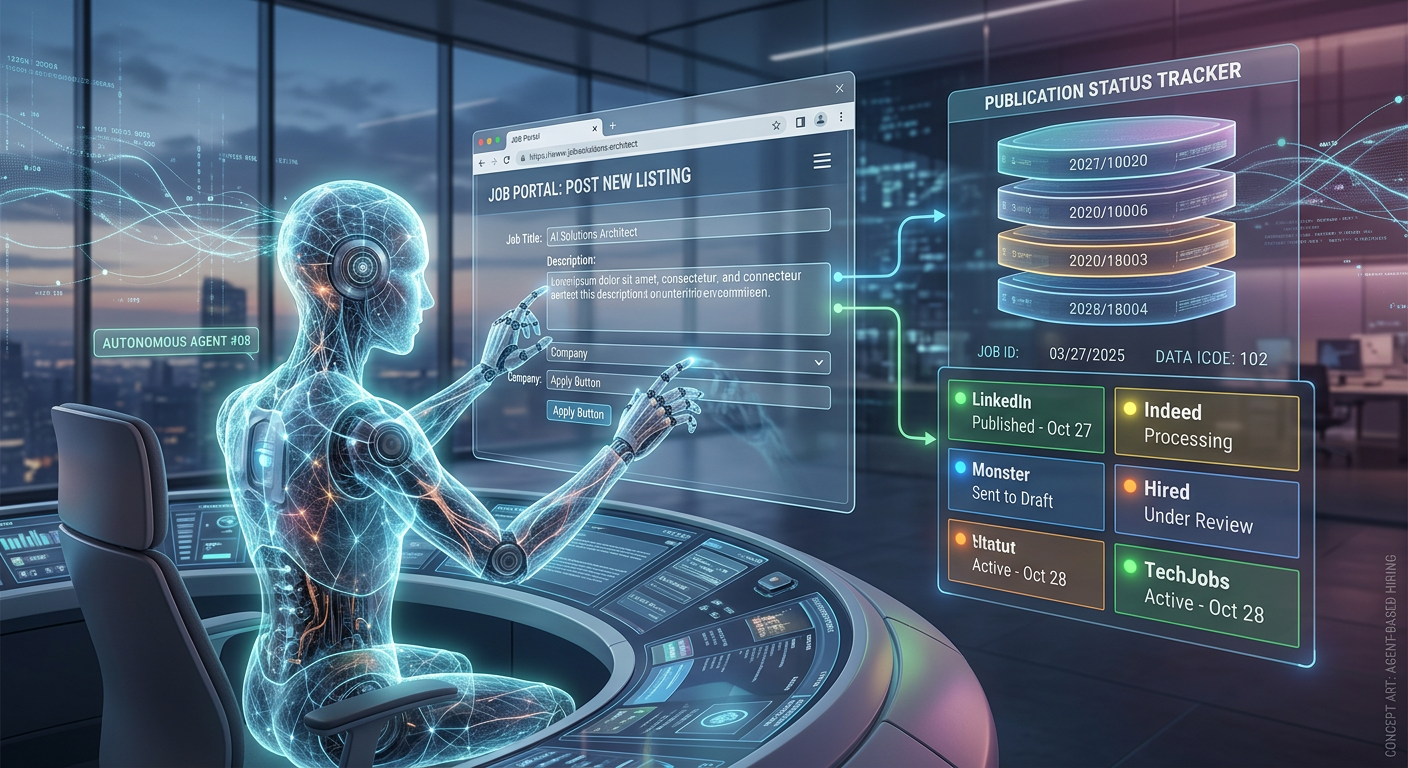

AI Agents That Post and Remove Job Listings Autonomously

What happens when you replace brittle web scraping with an AI that sees and navigates job portals like a human would.

The problem: Job portals without APIs

Job boards are essential for reaching candidates, but most of them, especially regional portals, do not offer APIs. Publishing a job listing means logging into a web portal, filling out a multi-step form, uploading details, and clicking publish. Removing a listing means logging back in, finding the job, clicking delete, and confirming through modal dialogs. For every portal. For every job.

Automating this with traditional tools means writing scripts that target specific HTML elements: click this button with this CSS class, fill this input with this ID, wait for this div to appear. It works until the portal changes a class name, moves a button, or adds a popup. Then the script breaks silently, and jobs either fail to post or fail to come down.

The fragility runs deep:

- Hardcoded selectors break constantly - any change to the portal's front-end markup invalidates the automation. A minor redesign can take down the entire posting pipeline.

- Browser automation inside workflow engines is risky - running a full headless browser inside n8n consumes memory and CPU, threatening the stability of every other workflow on the same instance.

- Duplicate postings and orphaned listings - without proper status tracking, a job can be posted twice to the same portal, or remain live long after it should have been closed.

- Every new portal means new code - each job board has a different layout, different form structure, different confirmation flow. Supporting a new portal means writing and maintaining an entirely new set of selectors.

We took a fundamentally different approach.

The agentic approach

Instead of writing scripts that target HTML elements, we built a system where an AI agent visually navigates job portals the same way a person would: reading the page, finding the right buttons, filling in the fields, and confirming the action. The agent does not depend on CSS selectors or DOM structure. It sees the interface and reasons through it.

The system manages the full lifecycle of a job listing: when a recruiter marks a job as "Publish" in the database, the agent logs into the portal and posts it. When the job is marked "Closed", the agent logs back in, finds the listing, and removes it. The recruiter never touches the portal directly.

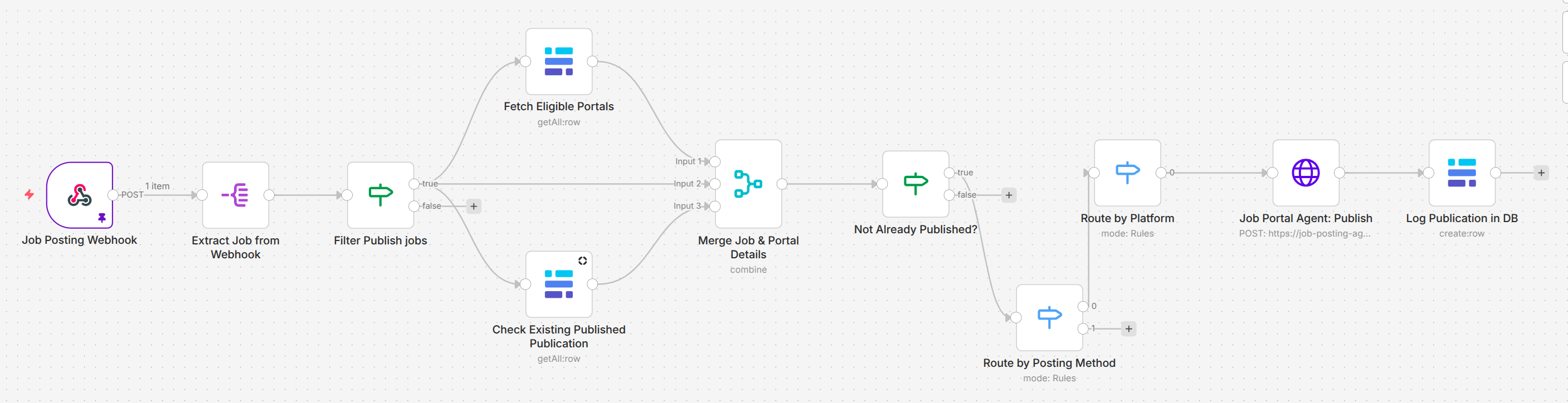

Image 1 - The job posting workflow

Three components work together:

A database that tracks every job, portal, and publication

Three Baserow tables form the system's source of truth. The Jobs table holds every position with its details and status. The JobPortals table stores credentials and configuration for each supported portal. The JobPublications table records every publication event: which job was posted to which portal, when, its current status, and the public URL.

This structure means the system always knows the state of every listing. Before posting, it checks whether the job is already live on that portal. Before removing, it confirms there is an active publication to take down. No duplicates. No orphaned listings.
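As a rough picture of that structure, the three tables can be sketched as Python dataclasses. The field names here are assumptions chosen for illustration, not the actual Baserow column names, and `find_active_publication` is a hypothetical helper showing the lookup that both checks rely on.

```python
from dataclasses import dataclass
from datetime import datetime
from typing import Optional


@dataclass
class Job:
    id: int
    title: str
    country: str
    status: str  # e.g. "Publish" or "Closed"


@dataclass
class JobPortal:
    id: int
    name: str
    country: str
    login_url: str
    username: str
    password: str  # assumption: in practice this would be a secret reference
    active: bool = True


@dataclass
class JobPublication:
    job_id: int
    portal_id: int
    status: str  # "Published" or "Removed"
    public_url: Optional[str] = None
    portal_job_id: Optional[str] = None
    published_at: Optional[datetime] = None
    removed_at: Optional[datetime] = None


def find_active_publication(publications, job_id, portal_id):
    """Return the live publication for this job/portal pair, or None."""
    for pub in publications:
        same_pair = (pub.job_id, pub.portal_id) == (job_id, portal_id)
        if same_pair and pub.status == "Published":
            return pub
    return None
```

A `None` result means it is safe to post and there is nothing to remove; any other result means the opposite.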

An AI agent that navigates portals autonomously

The core of the system is a browser-use AI agent powered by GPT-4o and Playwright, running as a standalone microservice on Google Cloud Run. When called, the agent receives the job details and portal credentials, launches a browser, and autonomously navigates the portal: logging in, filling out the posting form, and publishing the job.

For removal, the same agent logs in, scrolls through the employer dashboard, locates the specific listing by ID or title, clicks delete, and confirms through any modal dialogs. If the listing has already been manually removed, the agent recognizes this and returns success gracefully.

The critical difference from traditional automation: the agent does not rely on hardcoded selectors. If the portal changes its layout, moves a button, or adds a new confirmation step, the agent adapts because it is reading the interface visually and reasoning about what to do, not following a fixed script.
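To make the contrast concrete, here is a hedged sketch of what the agent's instructions might look like: plain-language steps rather than selectors. The wording of the steps, the `build_task` function, and the dictionary keys are all hypothetical; the real system prompts are not shown in this article.

```python
def build_task(action, job, portal):
    """Compose a natural-language task for a browser agent (hypothetical wording)."""
    login = (
        f"Go to {portal['login_url']} and log in as {portal['username']} "
        "using the password provided separately."
    )
    if action == "post":
        steps = [
            login,
            "Open the form for posting a new job.",
            f"Fill in the title '{job['title']}' and the job details provided.",
            "Publish the job, confirming any dialogs that appear.",
            "Report the public URL and the portal's ID for the new listing.",
        ]
    elif action == "remove":
        steps = [
            login,
            "Open the employer dashboard and scroll through the listings.",
            f"Find the listing with ID '{job['portal_job_id']}' or title '{job['title']}'.",
            "Delete it, confirming any modal dialogs.",
            "If the listing is not there, report it as already removed and treat that as success.",
        ]
    else:
        raise ValueError(f"unknown action: {action}")
    # Number the steps so the agent sees an ordered plan.
    return "\n".join(f"{i}. {step}" for i, step in enumerate(steps, start=1))
```

Nothing in the task mentions a class name, an element ID, or a page structure, which is why a layout change on the portal does not invalidate it.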

An orchestration layer that ties it all together

n8n handles the logic, routing, and database operations. When a job's status changes in Baserow, a webhook triggers the appropriate workflow. The system fetches portal configurations and existing publication records in parallel, merges the data, validates that the action is needed (preventing duplicate posts or redundant removals), calls the agent microservice, and updates the publication record with the result.

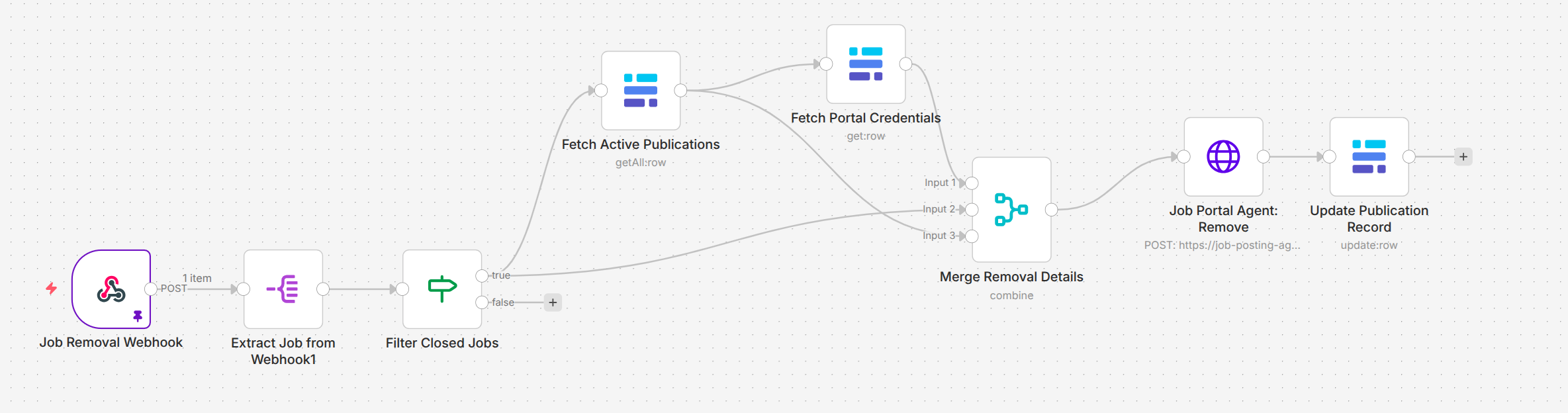

Image 2 - The job removal workflow

The recruiter's experience is simple: change a status field in Baserow. Everything downstream (portal navigation, form filling, confirmation, record-keeping) happens automatically.

How the system fits together

The architecture separates concerns deliberately. n8n handles orchestration and data. The AI agent handles browser interaction. Baserow holds the state.

Webhook trigger and validation - When a recruiter changes a job's status in Baserow, a webhook fires. The workflow extracts the updated job record and checks whether the status actually changed to "Publish" or "Closed", which prevents false triggers from minor edits like fixing a typo in the job description.
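The validation step amounts to comparing the row before and after the edit. The sketch below assumes the webhook payload exposes both versions of the row as dictionaries with a `status` key; the function name, the key name, and the "Draft" status used in the usage note are illustrative, not taken from the actual workflow.

```python
# Map the statuses that matter to the workflow they should trigger.
ACTIONS = {"Publish": "post", "Closed": "remove"}


def action_for_update(old_row, new_row):
    """Return "post", "remove", or None if the update needs no action."""
    old_status = (old_row or {}).get("status")
    new_status = new_row.get("status")
    if new_status == old_status:
        # Some other field changed (e.g. a typo fix); not a status transition.
        return None
    return ACTIONS.get(new_status)
```

For example, `action_for_update({"status": "Draft"}, {"status": "Publish"})` yields `"post"`, while an edit that leaves the status at "Publish" yields `None`.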

Parallel data retrieval - For posting, the workflow simultaneously fetches all active portals matching the job's country and checks the JobPublications table for existing records. For removal, it fetches active publications for the job and retrieves the corresponding portal credentials. This parallel approach keeps the workflow fast.
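In n8n this is two branches that run side by side and then merge. The same idea in Python, as a sketch only: the two fetchers below are stand-ins that return hard-coded rows instead of querying Baserow, and the country codes and the second portal name are made up for the example.

```python
import asyncio


async def fetch_active_portals(country):
    """Stand-in for a JobPortals query filtered by country."""
    await asyncio.sleep(0)  # placeholder for network I/O
    portals = [
        {"id": 10, "name": "Jobslin", "country": "NG", "active": True},
        {"id": 11, "name": "ExampleBoard", "country": "KE", "active": True},
    ]
    return [p for p in portals if p["active"] and p["country"] == country]


async def fetch_publications(job_id):
    """Stand-in for a JobPublications query filtered by job."""
    await asyncio.sleep(0)  # placeholder for network I/O
    publications = [{"job_id": 1, "portal_id": 10, "status": "Removed"}]
    return [p for p in publications if p["job_id"] == job_id]


async def load_posting_context(job):
    # Both lookups are independent, so they can run concurrently.
    portals, publications = await asyncio.gather(
        fetch_active_portals(job["country"]),
        fetch_publications(job["id"]),
    )
    return {"job": job, "portals": portals, "publications": publications}
```

Calling `asyncio.run(load_posting_context({"id": 1, "country": "NG"}))` returns the merged context that the next step validates.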

Duplicate and state protection - Before posting, the system confirms no active publication exists for this job on the target portal. Before removing, it confirms an active publication exists to remove. This layer prevents the most common failure modes: double-posting a job or attempting to remove a listing that is already gone.
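Expressed over the merged data, both guards are simple filters. This is a minimal sketch assuming portals and publications arrive as lists of dictionaries with the keys shown; the function names are hypothetical.

```python
def portals_needing_post(portals, publications):
    """Keep only portals where this job has no live publication."""
    live = {p["portal_id"] for p in publications if p["status"] == "Published"}
    return [portal for portal in portals if portal["id"] not in live]


def publications_to_remove(publications):
    """Keep only publications that are still live and can be taken down."""
    return [p for p in publications if p["status"] == "Published"]
```

An empty result from either function means the workflow stops without calling the agent.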

Agent API call - The validated payload is sent via HTTP POST to the microservice running on Google Cloud Run. The agent launches a browser, executes the task, and returns the result: the public job URL and portal-specific ID for new postings, or a confirmation of successful removal.
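A sketch of that exchange using only the Python standard library follows. The service URL, the one-route-per-action layout, and the JSON field names (`success`, `public_url`, `portal_job_id`, `error`) are assumptions made for illustration; the microservice's actual contract is not documented here.

```python
import json
import urllib.request

AGENT_URL = "https://agent.example.run.app"  # hypothetical Cloud Run URL


def build_agent_request(action, job, portal):
    """Build the POST request for one agent task ("post" or "remove")."""
    body = json.dumps({"job": job, "portal": portal}).encode("utf-8")
    return urllib.request.Request(
        url=f"{AGENT_URL}/{action}",
        data=body,
        headers={"Content-Type": "application/json"},
        method="POST",
    )


def call_agent(request, timeout=600):
    """Send the request; browser tasks are slow, so allow a long timeout."""
    with urllib.request.urlopen(request, timeout=timeout) as response:
        return json.loads(response.read().decode("utf-8"))


def parse_agent_result(action, result):
    """Extract the fields the workflow needs, or raise if the agent failed."""
    if not result.get("success"):
        raise RuntimeError(result.get("error", "agent reported failure"))
    if action == "post":
        return {
            "public_url": result["public_url"],
            "portal_job_id": result["portal_job_id"],
        }
    return {"removed": True}
```

Keeping request building and result parsing separate from the network call makes both easy to check without a running service.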

Database update - On success, n8n writes the result back to Baserow. For new postings, a row is created in JobPublications with status "Published" and the public URL. For removals, the existing row is updated to "Removed" with a timestamp.
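The write-back can be sketched as a function that turns an agent result into the row to store. Column names such as `published_at` and `removed_at` are assumed for the example, as is the function itself; in the real system this step is an n8n database operation.

```python
from datetime import datetime, timezone


def publication_row(action, job_id, portal_id, result, existing=None, now=None):
    """Return the JobPublications row to create (post) or update (remove)."""
    now = now or datetime.now(timezone.utc)
    if action == "post":
        return {
            "job_id": job_id,
            "portal_id": portal_id,
            "status": "Published",
            "public_url": result["public_url"],
            "portal_job_id": result["portal_job_id"],
            "published_at": now.isoformat(),
        }
    if action == "remove":
        if existing is None:
            raise ValueError("removal requires the existing publication row")
        # Copy the existing row and mark it as removed with a timestamp.
        return {**existing, "status": "Removed", "removed_at": now.isoformat()}
    raise ValueError(f"unknown action: {action}")
```

Because a removal updates the existing row rather than deleting it, the table keeps a history of where and when each job was live.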

What this enables

The system is built and ready for production use on regional job portals, starting with the Nigerian job board Jobslin.

The shift from selector-based automation to an agentic approach changes what is possible:

- Portal changes do not break the system - because the agent navigates visually rather than by CSS class names, front-end updates to the job board do not require code changes on our side.

- The browser engine runs outside n8n - isolating the heavy Playwright browser in a dedicated Cloud Run container protects n8n's stability. The workflow engine handles logic; the microservice handles browser work.

- Publication state is always known - every posting and removal is tracked in Baserow with timestamps and status. No listing exists in an unknown state.

- New portals become a configuration task, not a coding project - adding a new job board means adding a system prompt and route to the microservice, not writing and maintaining a new set of brittle selectors.

- The recruiter's workflow stays simple - they change a field in a table. The system handles everything else. The agentic architecture is completely invisible to the end user.

What comes next

The current system covers the full post-and-remove lifecycle on a single portal. Several extensions would broaden its reach:

- Multi-portal expansion - onboarding additional job boards across different markets. Each new portal requires a new system prompt and route in the microservice, not new automation code, making expansion significantly faster than the traditional approach.

- Agent prompt optimization - monitoring execution logs to identify where the agent takes unnecessary steps or gets confused by unexpected UI elements, then tightening the prompts to enforce the shortest, most efficient navigation path.

- Listing verification - periodically checking that published listings are still live on the portal, catching cases where a portal removes a listing for policy reasons without notifying the system.

Key takeaway

Most job portals will never offer APIs. That has traditionally meant a choice between manual work and brittle selector-based automation that breaks with every front-end update. The agentic approach opens a third path: an AI that navigates the portal the way a person would, but does it consistently, automatically, and at scale.

The system does not fight the portal's interface; it works with it. When the portal changes, the agent adapts. When a new portal needs to be supported, it gets a new prompt, not a new codebase. That is the difference between automation that works until it breaks and automation that keeps working.