How We Built an AI-Powered EU Funding Call Selection Tool

Replacing guesswork with data-driven insights for Horizon Europe and beyond

The problem: Too many calls, not enough clarity

The European Union invests massively in research and innovation. Horizon Europe alone has a budget of €95.5 billion from 2021 to 2027, with approximately €7.3 billion allocated in 2025 - including roughly €1.6 billion specifically targeting artificial intelligence. Across programmes like Digital Europe and the European Defence Fund, hundreds of funding calls are open at any given time, each with its own scope, budget, and consortium rules.

For organizations looking to apply, the selection process is critical — and surprisingly manual. Getting it right means investing months of proposal writing into a call with a realistic chance of success. Getting it wrong means wasting that effort on a poor match, or worse, leaving funding on the table because the perfect call was buried in the noise.

In practice, the process typically looks like this:

- Hours of manual reading - browsing the EU Funding & Tenders Portal, opening call after call, trying to assess relevance.

- Guesswork about competitiveness - no easy way to know how many organizations will apply, or what the historical success rate looks like for similar topics.

- No structured way to compare - call data is spread across individual portal pages with no centralized, filterable view.

- Institutional knowledge stays informal - lessons from past applications live in people's heads, not in a reusable system.

We set out to change that.

What we built

The EU Funding Call Intelligence & Selection Assistant is a decision-support tool that automates the collection, structuring, and analysis of EU funding calls. It gives teams a clear, data-backed view of which opportunities are most worth pursuing and why.

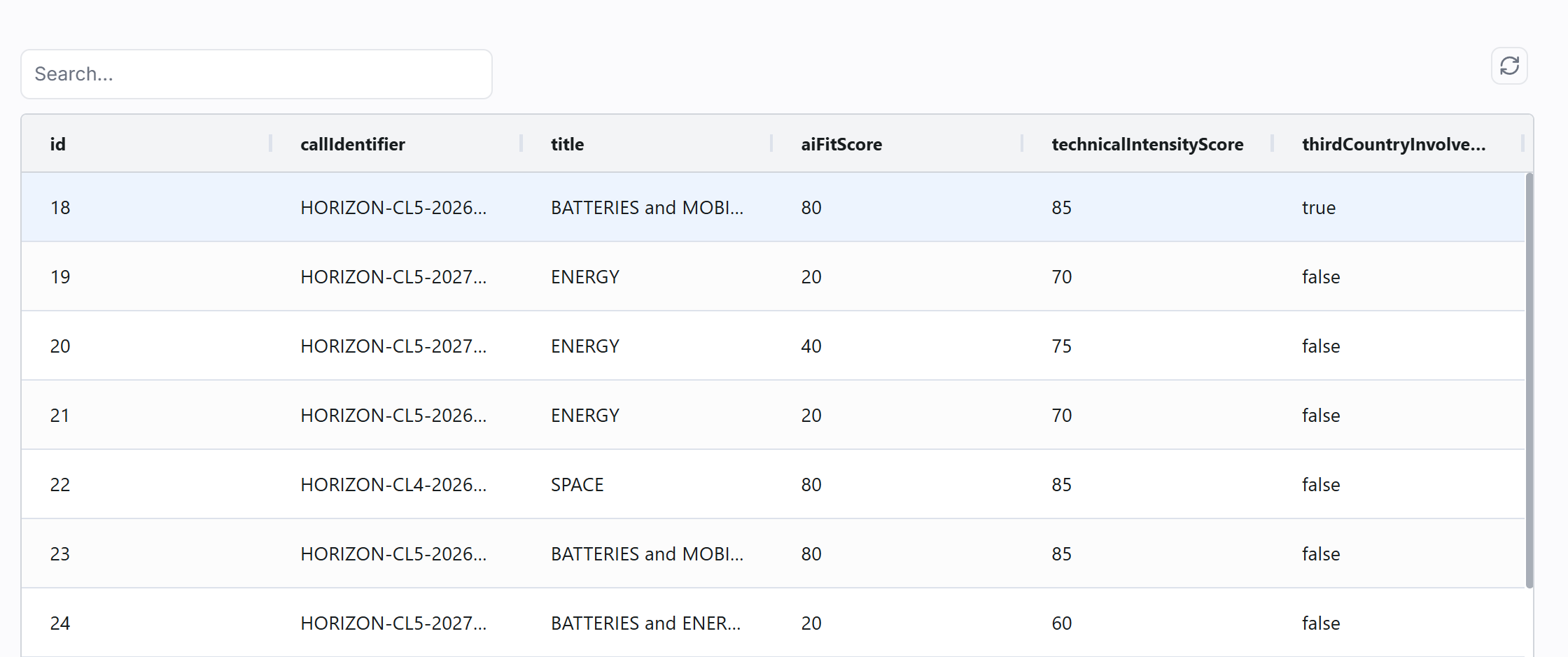

The main interface presents all open and forthcoming calls in a searchable, sortable table — each row showing the call identifier, title, AI fit score, technical intensity score, and third-country involvement at a glance.

Image 1 - The calls table

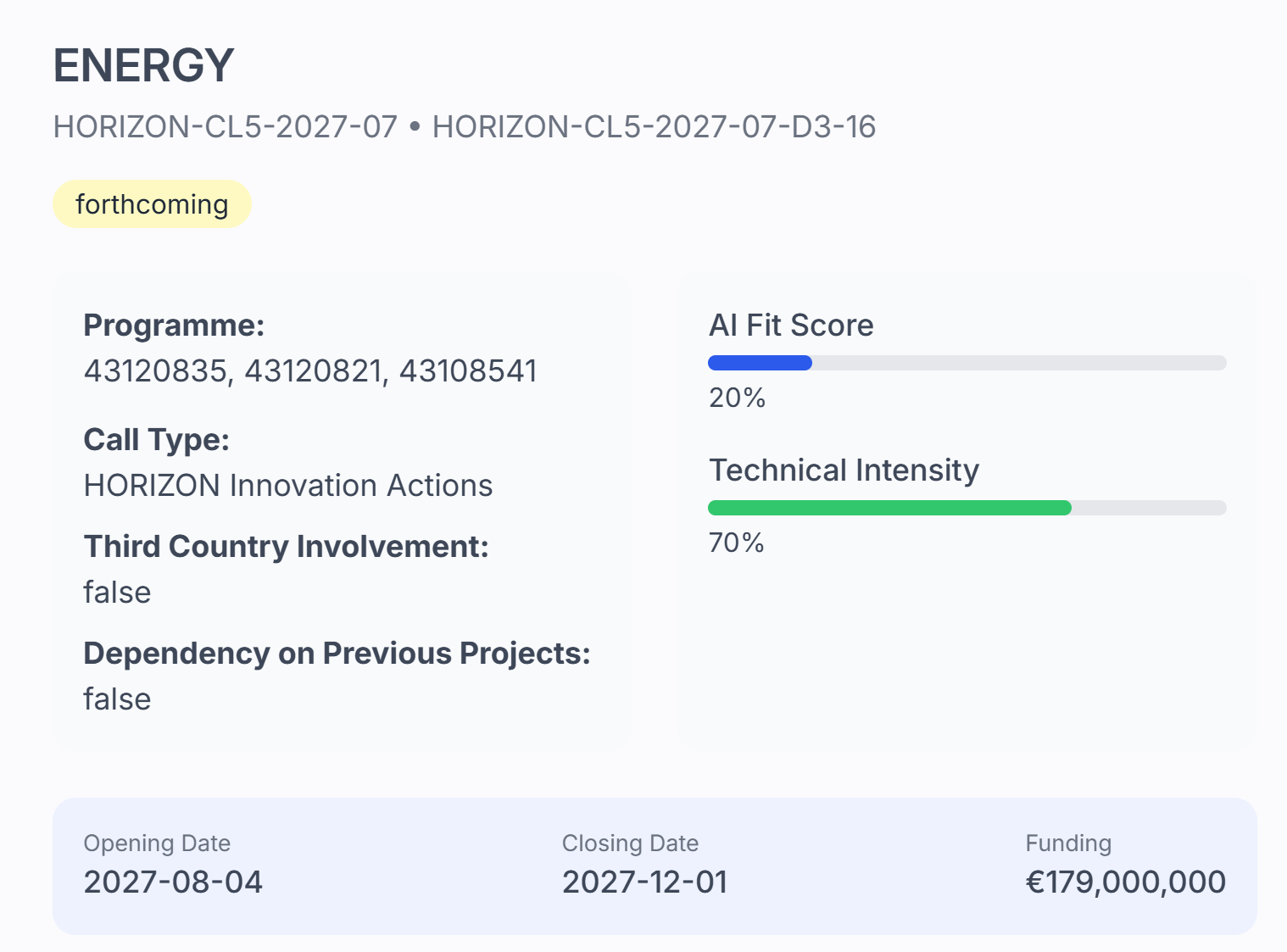

Selecting any call opens its detail panel, where the AI-generated analysis is displayed: programme info, call type, AI fit and technical intensity scores, dependency flags, and key dates.

Image 2 - Call detail view

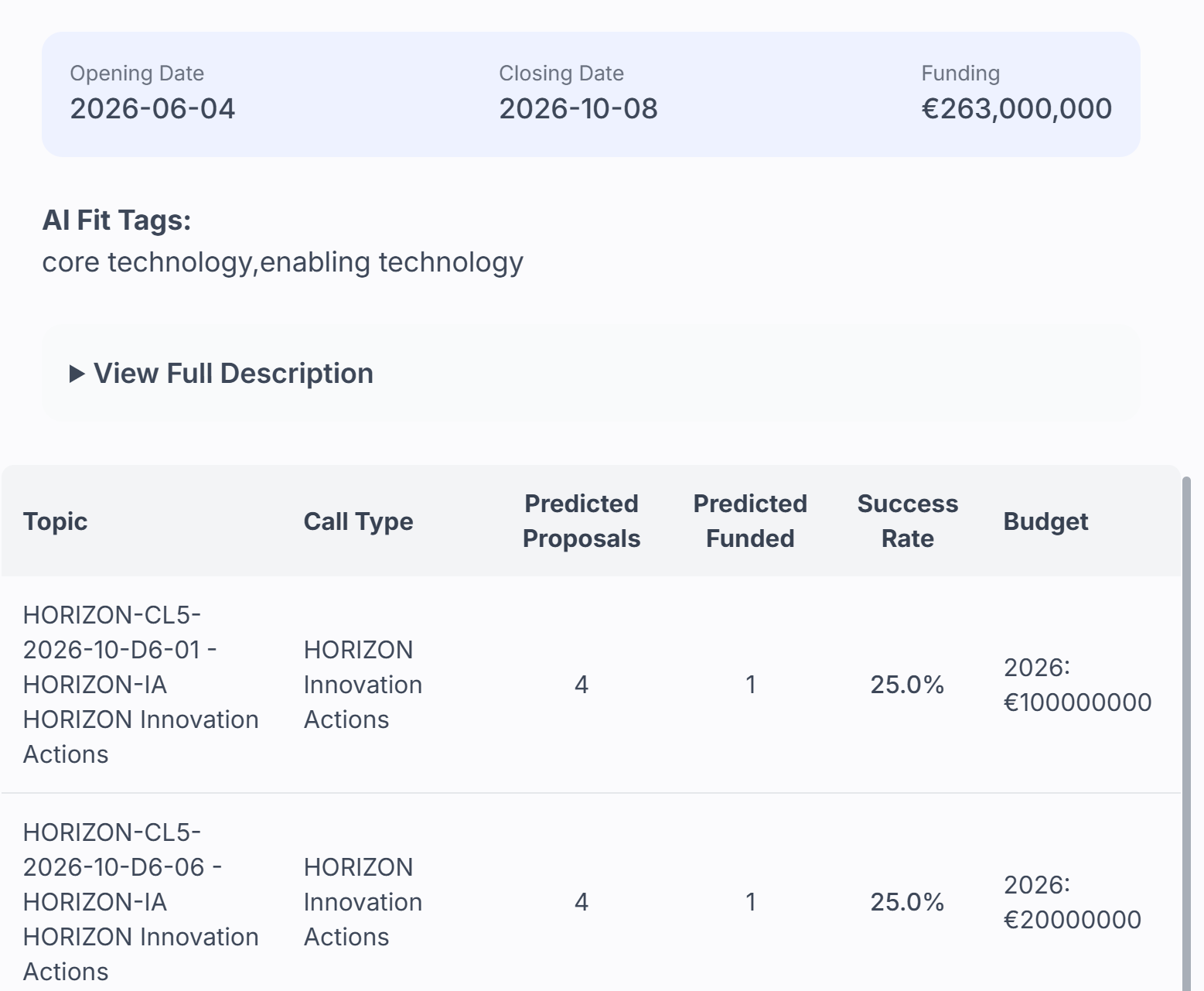

Scrolling down within the detail panel reveals the competitiveness predictions: for each topic in the call, the system shows the predicted number of proposals, predicted funded count, estimated success rate, and budget — all calculated from similar historical calls.

Image 3 - Competitiveness predictions per topic

The tool does three things:

1. Collects and structures call data automatically

A daily automated pipeline connects to the European Commission's Search API and extracts every newly published call. For each one, it captures:

- Programme, Call ID, and topic

- Call type and current status (open, forthcoming, or closed)

- Funding amount and budget breakdown per topic

- Opening and closing dates

- Keywords and thematic tags

- Full call description

- Evaluation results (for closed calls) - including how many proposals were submitted and how many received funding

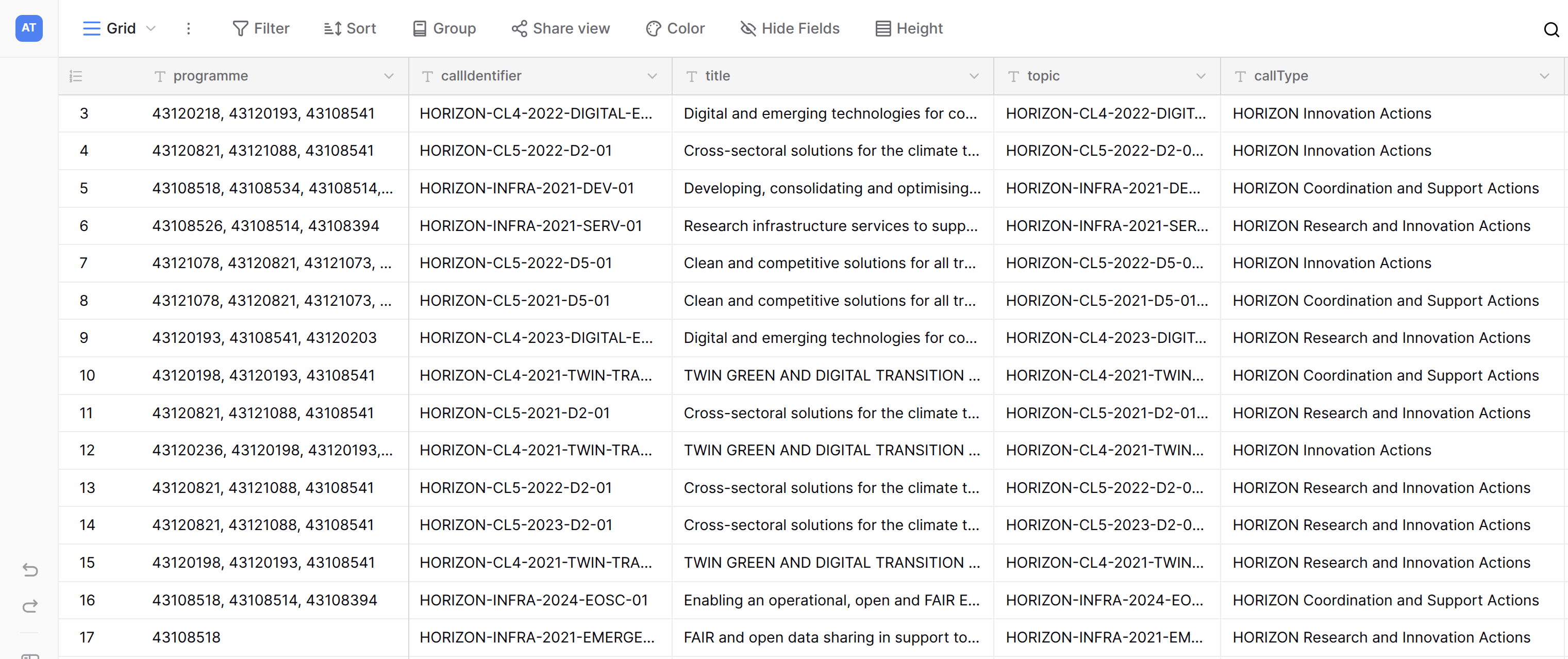

All of this is stored in a structured, searchable database. Instead of browsing portal pages one at a time, users can now filter, sort, and compare calls in seconds.

Image 4 - Structured Calls Database
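As an illustration of what one structured record might look like, here is a minimal Python sketch of the fields listed above. The class names, attribute names, and the sample values are assumptions made for this example; the production schema lives in Baserow and is not reproduced here.

```python
from dataclasses import dataclass, field
from datetime import date
from typing import Optional


@dataclass
class TopicResult:
    """One topic of a call (hypothetical shape, not the real schema)."""
    topic_id: str
    budget_eur: float
    proposals_submitted: Optional[int] = None  # known only after evaluation
    proposals_funded: Optional[int] = None

    def success_rate(self) -> Optional[float]:
        """Funded / submitted, or None when evaluation results are missing."""
        if not self.proposals_submitted or self.proposals_funded is None:
            return None
        return self.proposals_funded / self.proposals_submitted


@dataclass
class CallRecord:
    programme: str
    call_id: str
    call_type: str
    status: str  # "open", "forthcoming", or "closed"
    opening_date: date
    closing_date: date
    description: str = ""
    keywords: list[str] = field(default_factory=list)
    topics: list[TopicResult] = field(default_factory=list)

    def total_budget_eur(self) -> float:
        """Sum of the per-topic budgets."""
        return sum(t.budget_eur for t in self.topics)


# Made-up sample data, for illustration only.
example = CallRecord(
    programme="Horizon Europe",
    call_id="EXAMPLE-CALL-01",
    call_type="RIA",
    status="closed",
    opening_date=date(2024, 1, 15),
    closing_date=date(2024, 4, 15),
    keywords=["artificial intelligence"],
    topics=[
        TopicResult("EXAMPLE-TOPIC-01", 8_000_000, 200, 40),
        TopicResult("EXAMPLE-TOPIC-02", 4_000_000),
    ],
)
```

Keeping evaluation results optional lets the same record type cover open, forthcoming, and closed calls.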

2. Generates AI-powered insights for every open call

For each open or forthcoming call, the system uses an AI model to assess several dimensions that matter when deciding whether to apply:

| Insight | What it tells you |
| --- | --- |
| AI Fit Score (0–100) | How relevant the call is to artificial intelligence work |
| AI Fit Tags | Role of AI in the call: core technology, enabling technology, data/analytics support, or evaluation/monitoring |
| Technical Intensity Score (0–100) | Technical depth and domain complexity, which helps gauge whether the call matches the team's capabilities |
| Dependency on Previous Projects | Whether the call requires building on prior EU-funded work |
| Third Country Involvement | Whether the call involves partners outside the EU |

These insights are generated automatically by analyzing each call's title, description, keywords, and tags - no manual review required.
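Because these values come from a language model, it can help to validate each reply before storing it. The sketch below assumes the model is asked to answer in JSON; the key names and the `parse_insight` helper are hypothetical, and only the score range and the four tag values come from the table above.

```python
import json

# The four AI fit tags described in the table above.
ALLOWED_TAGS = {
    "core technology",
    "enabling technology",
    "data/analytics support",
    "evaluation/monitoring",
}


def parse_insight(raw: str) -> dict:
    """Parse a JSON reply and reject out-of-range scores or unknown tags."""
    data = json.loads(raw)

    def score(key: str) -> int:
        value = int(data[key])
        if not 0 <= value <= 100:
            raise ValueError(f"{key} out of range: {value}")
        return value

    def flag(key: str) -> bool:
        value = data[key]
        if not isinstance(value, bool):
            raise ValueError(f"{key} must be true or false, got {value!r}")
        return value

    tags = [t.strip().lower() for t in data.get("ai_fit_tags", [])]
    unknown = [t for t in tags if t not in ALLOWED_TAGS]
    if unknown:
        raise ValueError(f"unexpected tags: {unknown}")

    return {
        "ai_fit_score": score("ai_fit_score"),
        "ai_fit_tags": tags,
        "technical_intensity_score": score("technical_intensity_score"),
        "depends_on_previous_projects": flag("depends_on_previous_projects"),
        "third_country_involvement": flag("third_country_involvement"),
    }


# Made-up model reply, for illustration only.
sample = parse_insight(
    '{"ai_fit_score": 85, "ai_fit_tags": ["Core technology"], '
    '"technical_intensity_score": 70, '
    '"depends_on_previous_projects": false, '
    '"third_country_involvement": true}'
)
```

Rejecting malformed replies at this point keeps bad scores out of the insights table, where they would otherwise distort sorting and filtering.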

3. Predicts competitiveness using historical data

This is where the tool becomes a strategic advantage. For every open call, the system:

- Finds the 10 most similar closed calls from a vector store using semantic similarity (OpenAI's text-embedding-3-large model with cosine similarity in MongoDB Atlas).

- Groups the matched historical topics by call type.

- Calculates the median number of proposals submitted, median number funded, and the estimated success rate for each topic.

The result: a concrete, data-backed prediction of how competitive each topic is likely to be before the deadline arrives. Teams can answer questions that were previously impossible to answer with confidence: Will this call attract 50 proposals or 500? Is the success rate typical for this type of call, or an outlier?
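The three steps above can be sketched in plain Python. This is an illustration under stated assumptions, not the production workflow: the real similarity search runs inside MongoDB Atlas, the dictionary keys and function names are invented for the example, and the success rate is assumed to be the ratio of the two medians, which the description above does not specify.

```python
import math
from collections import defaultdict
from statistics import median


def cosine_similarity(a, b):
    """Cosine of the angle between two equal-length vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    return dot / norm if norm else 0.0


def top_k_similar(query_vec, closed_calls, k=10):
    """Return the k closed calls whose embeddings are closest to query_vec."""
    ranked = sorted(
        closed_calls,
        key=lambda c: cosine_similarity(query_vec, c["embedding"]),
        reverse=True,
    )
    return ranked[:k]


def predict_by_call_type(similar_calls):
    """Group historical topics by call type and summarise them with medians."""
    groups = defaultdict(list)
    for call in similar_calls:
        groups[call["call_type"]].extend(call["topics"])

    predictions = {}
    for call_type, topics in groups.items():
        submitted = median(t["submitted"] for t in topics)
        funded = median(t["funded"] for t in topics)
        predictions[call_type] = {
            "predicted_proposals": submitted,
            "predicted_funded": funded,
            # Assumption: success rate = median funded / median submitted.
            "estimated_success_rate": funded / submitted if submitted else None,
        }
    return predictions


# Toy history with 2-dimensional embeddings; all numbers are made up.
history = [
    {"call_type": "RIA", "embedding": [1.0, 0.0],
     "topics": [{"submitted": 100, "funded": 10}, {"submitted": 60, "funded": 6}]},
    {"call_type": "RIA", "embedding": [0.9, 0.1],
     "topics": [{"submitted": 80, "funded": 12}]},
    {"call_type": "CSA", "embedding": [0.0, 1.0],
     "topics": [{"submitted": 20, "funded": 4}]},
]
matches = top_k_similar([1.0, 0.0], history, k=2)
predictions = predict_by_call_type(matches)
```

Medians are less sensitive than means to a single unusually oversubscribed historical topic, which matters when only ten neighbours feed each prediction.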

How it works under the hood

The system integrates several specialized platforms, each handling what it does best.

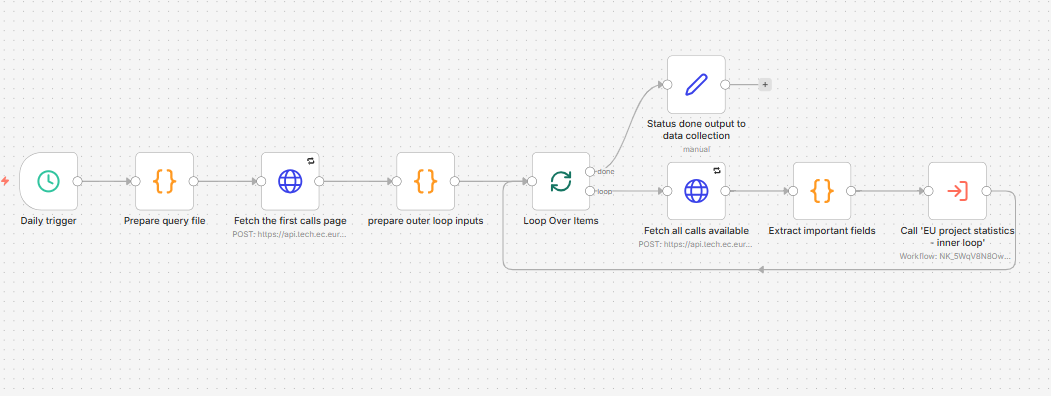

- Data collection - n8n orchestrates the daily pipeline. The main workflow fetches all calls published that day from the EU Commission's Search API, handles pagination, extracts relevant fields, and writes records to Baserow.

Image 5 - Data Collection Workflow

- Structured storage - Baserow hosts two main tables: one for raw call data and one for AI-generated insights. The schema captures all fields needed for both filtering and analysis.

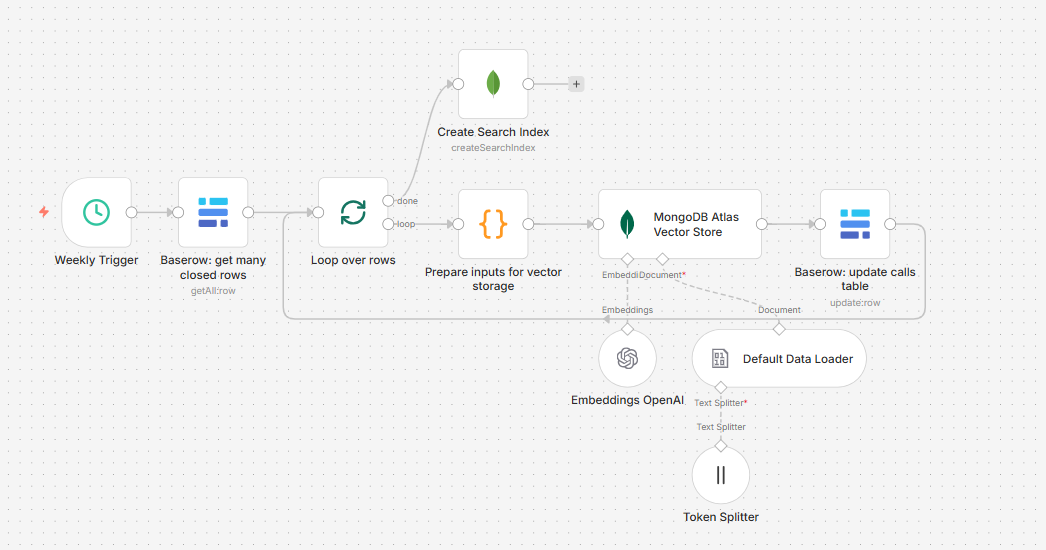

- Vector search - A weekly workflow stores closed calls with completed evaluation results in a MongoDB Atlas vector store using 3072-dimensional OpenAI embeddings. This creates a growing, searchable archive of historical outcomes.

Image 6 - Vector Storage Workflow

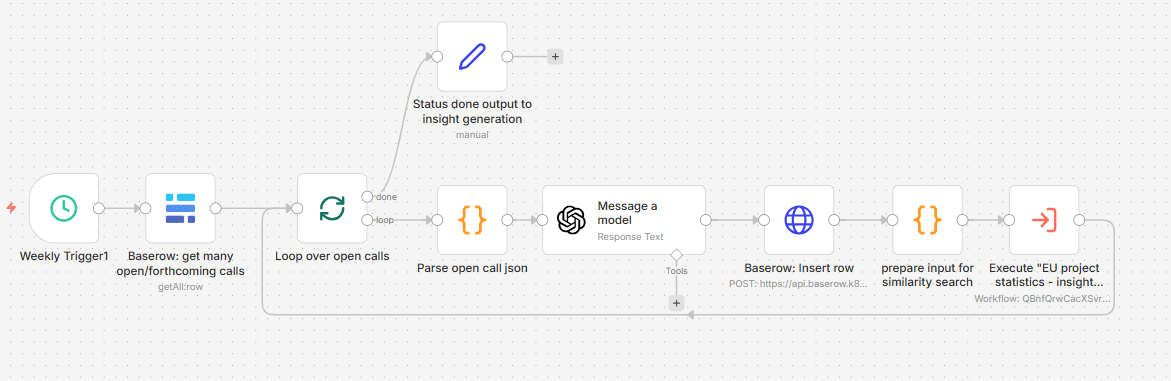

- Insight generation - A second weekly workflow processes open and forthcoming calls: GPT-4o-mini generates the AI fit scores and tags, then the system retrieves similar historical calls and calculates competitiveness predictions.

Image 7 - Insight Generation Workflow

- Data synchronization - A daily workflow keeps the insights table current: closed calls are removed, and forthcoming calls that have opened get their status updated.

- User interface - A Windmill-based application provides an interactive front-end with an infinite-scroll table, built-in search and column sorting, and a detail panel showing the full set of insights when a row is selected.
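The pagination step in the data collection workflow is handled by n8n in the actual system. For readers who think in code, the logic might look roughly like the Python sketch below. `fetch_page` is a hypothetical callable standing in for one request to the Search API, and the stopping rule (stop on a short page) is an assumption rather than documented API behaviour.

```python
from typing import Callable, Iterator


def iter_calls(
    fetch_page: Callable[[int, int], list],
    page_size: int = 50,
    max_pages: int = 1000,
) -> Iterator[dict]:
    """Yield every record, requesting pages until a short page comes back."""
    for page_number in range(1, max_pages + 1):
        results = fetch_page(page_number, page_size)
        yield from results
        if len(results) < page_size:
            break  # last page reached


def fake_fetch(page_number: int, page_size: int) -> list:
    """Stand-in for the real API: serves 7 made-up records in pages."""
    data = [{"call_id": f"CALL-{i}"} for i in range(7)]
    start = (page_number - 1) * page_size
    return data[start:start + page_size]


calls = list(iter_calls(fake_fetch, page_size=3))
```

Passing the fetch function in as a parameter keeps the loop testable without network access, and the `max_pages` cap guards against an endpoint that never returns a short page.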

The impact

The tool is deployed in production and actively used for funding call selection.

- Before: A team member spends hours each week manually browsing the EU portal, reading call descriptions, and maintaining spreadsheets. Selection is based on experience and gut feel. Historical patterns go unrecorded.

- After: The team opens a single interface, filters by programme or AI relevance, checks predicted competitiveness, and focuses effort on the calls with the best strategic fit. Every decision is backed by data.

What changed:

- Review time dropped dramatically - structured filtering replaces hours of manual portal browsing.

- Prioritization is data-informed - AI fit scores and competitiveness predictions give teams a quantified basis for decisions.

- Institutional knowledge compounds - every closed call entering the vector store makes future predictions more grounded.

- Every insight is transparent - scores and predictions trace back to the underlying data, so teams can interrogate the reasoning.

What comes next

The current system is a solid foundation. Potential extensions include:

- Deeper similarity matching - fine-tuning the vector search to account for how topics evolve across programme generations.

- Consortium intelligence - incorporating data about past winning consortia to help identify potential partners.

- Proposal drafting support - using structured call data and historical patterns to generate proposal outline suggestions.

- Expanded programme coverage - extending the pipeline beyond Horizon Europe to other EU funding programmes.

Key takeaway

The EU Funding Call Intelligence & Selection Assistant shows what becomes possible when you replace manual browsing with structured data, and intuition with statistical analysis. It is not about removing human judgment; it is about giving that judgment better inputs.

By combining automated data extraction, structured storage, vector-based similarity search, and AI-assisted analysis, we turned a labor-intensive, experience-dependent process into a scalable, evidence-based decision-support tool.